My series dedicated to the use of SOLID principles is back, this time to talk about the interface segregation principle. For reference, here are the five principles once again.

- Single responsibility principle (SRP)

- Open / closed principle (OCP)

- Liskov substitution principle (LSP)

- Interface segregation principle (ISP)

- Dependency inversion principle (DIP)

The interface segregation principle (ISP) seems to be one of those principles that, unfortunately, is taken either too lightly or too seriously. Its logic is quite simple and appealing, but when you go out of your way to implement it, it’s probably going to drive any code reviewer crazy.

Let’s dive first into the ISP definition.

The fourth SOLID principle: Interface Segregation Principle

“Clients should not be forced to depend upon interfaces that they do not use.”

What does this mean? It means that your interfaces should be split and grouped logically, so that every client actually needs all the functions its interface provides. If you achieve this, you get cohesion and avoid fat interfaces. As a consequence, you’ll have a greater number of smaller interfaces, each with a cohesive logic.

Of course, there may be cases where a single object needs functionality from several of these cohesive interfaces. That combination should not be done on the interface side (by merging the interfaces back together), but rather on the client side, in the class that needs them (e.g. by using multiple inheritance).
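To make the multiple inheritance idea concrete, here is a minimal sketch in Python. The names `Readable`, `Writable` and `MemoryBuffer` are invented for illustration and are not part of any real library. The two interfaces stay small, and only the class that genuinely needs both combines them.

```python
from abc import ABC, abstractmethod


class Readable(ABC):
    """Hypothetical small interface: only what a reader needs."""

    @abstractmethod
    def read(self) -> str: ...


class Writable(ABC):
    """Hypothetical small interface: only what a writer needs."""

    @abstractmethod
    def write(self, data: str) -> None: ...


class MemoryBuffer(Readable, Writable):
    """An object that needs both roles combines them itself."""

    def __init__(self) -> None:
        self._chunks = []

    def read(self) -> str:
        return "".join(self._chunks)

    def write(self, data: str) -> None:
        self._chunks.append(data)


def log_message(sink: Writable, message: str) -> None:
    # Depends on Writable only; it never sees read().
    sink.write(message + "\n")


def dump(source: Readable) -> str:
    # Depends on Readable only; it never sees write().
    return source.read()
```

Code that only logs keeps depending on `Writable` alone, so a change to `Readable` does not concern it, even though the same `MemoryBuffer` object can be passed to both functions.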

Natural tendencies

As a developer, I too suffer from the habit of always “building on top of”. When diving deeper into a specific problem, I often take the (wrong) approach of “I need this, so I’ll just add a function to the interface” to test it immediately. The approach itself is not the wrong part, since it allows me to quickly deploy a feature and immediately check that everything works.

The problem comes when, after this, I don’t apply the ISP to check what can actually be split logically, and refactor accordingly. Then, of course, you end up with a fat interface and a bunch of modules that have to implement functions that do nothing. And then, without warning, comes the nightmare of coupling.

Of course, there’s the other extreme: the guy who makes single-function interfaces just for the sake of ISP. Please, don’t be that guy. Reviewers hate that guy. ISP is all about cohesion, and if you’re doing this at the atomic level, well, there’s no logic to be cohesive about, is there?

A simple example

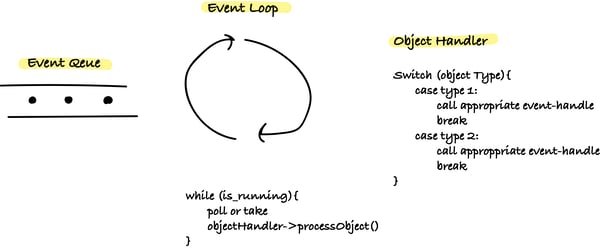

One of the examples I like to use to illustrate the merits of the ISP is a traditional event loop whose events are consumed by multiple clients. A simple way to implement an event loop is to have a loop that uses an object handler that consumes objects (e.g. from a queue) in some way. Then, these objects are processed by an event handler.

The interface of the object handler is generally very simple, short and generic (e.g. takes generic objects as argument and calls a processObject function based on the object type). On the other hand, the interface to the event handler, although simple, is rarely short. After all, it has all the code used to handle events, and they can be many.

Now let’s assume that there are multiple modules (i.e. clients) that implement the event handler interface but only want to process certain types of events. If there is a single event interface, all the modules will have to know about and implement all the handlers, which makes no sense. For example, suppose the main loop is used to control a network application, with one client module to handle connections and another one to send data. The event interface would declare functions to handle, for example, connection establishment and sending / receiving data. But the client module for handling the connection should not be interested in knowing if a data packet has arrived or not.
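Here is a rough sketch of what that single fat interface tends to look like. The class and method names (`EventHandler`, `on_connection_established` and so on) are assumptions made up for this illustration. Note the stubs the connection module is forced to carry around.

```python
from abc import ABC, abstractmethod


class EventHandler(ABC):
    """Hypothetical fat interface: every client implements every handler."""

    @abstractmethod
    def on_connection_established(self, peer: str) -> int: ...

    @abstractmethod
    def on_data_sent(self, payload: bytes) -> int: ...

    @abstractmethod
    def on_data_received(self, payload: bytes) -> int: ...


class ConnectionModule(EventHandler):
    """Only cares about connections, yet must know about data events too."""

    def __init__(self) -> None:
        self.peers = []

    def on_connection_established(self, peer: str) -> int:
        self.peers.append(peer)
        return 1

    # Stubs this module never needs but cannot leave out.
    def on_data_sent(self, payload: bytes) -> int:
        return 0

    def on_data_received(self, payload: bytes) -> int:
        return 0
```

If the stubs are removed, Python refuses to instantiate `ConnectionModule` because abstract methods are missing, and any change to a data-related signature forces an edit in a module that has nothing to do with data.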

Likewise, the client module for sending data should not be interested in knowing if a new connection is being established or not. And of course, your networking application will have many more features, which means more clients and more irrelevant dependencies.

So maybe the event interface is a good candidate for interface segregation. For instance, we can have one event handler dedicated to connections and another to data transfers. The respective modules would only implement the functions they actually need, achieving high cohesion. Moreover, changing an interface means rebuilding only the client modules that actually use those functions, rather than all client modules!
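The segregated version could be sketched as follows. Everything here is illustrative: the event types, the two handler interfaces and `run_event_loop` are hypothetical names, and the loop is a deliberately simplified, single-threaded stand-in for a real event loop.

```python
import queue
from abc import ABC, abstractmethod
from dataclasses import dataclass


@dataclass
class ConnectionEstablished:
    peer: str


@dataclass
class DataReceived:
    payload: bytes


class ConnectionEventHandler(ABC):
    @abstractmethod
    def on_connection_established(self, peer: str) -> None: ...


class DataEventHandler(ABC):
    @abstractmethod
    def on_data_received(self, payload: bytes) -> None: ...


class ConnectionModule(ConnectionEventHandler):
    def __init__(self) -> None:
        self.peers = []

    def on_connection_established(self, peer: str) -> None:
        self.peers.append(peer)


class DataModule(DataEventHandler):
    def __init__(self) -> None:
        self.received = []

    def on_data_received(self, payload: bytes) -> None:
        self.received.append(payload)


def run_event_loop(events, connection_handlers, data_handlers):
    """Drain the queue and route each event to the handlers for its type."""
    while not events.empty():
        event = events.get()
        if isinstance(event, ConnectionEstablished):
            for handler in connection_handlers:
                handler.on_connection_established(event.peer)
        elif isinstance(event, DataReceived):
            for handler in data_handlers:
                handler.on_data_received(event.payload)


events = queue.Queue()
events.put(ConnectionEstablished("10.0.0.1"))
events.put(DataReceived(b"hello"))

connections = ConnectionModule()
data = DataModule()
run_event_loop(events, [connections], [data])
```

Each module now implements exactly one function, with no stubs, and adding a new data event only touches `DataEventHandler` and the modules that implement it.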

What are the main benefits?

For me, the main benefits of the ISP are the following:

- Reduced coupling between different entities that use the same interface (or how you can save build time and a lot of debug pains)

- Minimizing the amount of useless code (or how you can save the time spent implementing functions that return 0)

- Having the black-box type of code that comes with such decoupling (or how you can test your system without dependencies screwing up your life)

Remember, smaller, specific interfaces are way easier to read / test / optimize than big fat interfaces. A change to one of them touches fewer files, which means changes in fewer clients, which means faster build times.

Happy shrinkings!